By Neal | June 28, 2021

In 2017, Moxie Marlinspike and Trevor Perrin were awarded the Levchin Prize for Real-World Cryptography for developing the Signal protocol. It’s a well-earned honor, and I have no doubt that Signal is the best-in-class practical encryption scheme for messaging that the cryptographic community knows about.

But, securing communication requires more than encryption. The sender also needs to make sure the public keys that they intend to use are the right keys for the intended recipients. This check is called authentication. If the sender uses the wrong public key then either the recipient won’t be able to read the message, or worse, an active attacker could intercept the message, and reencrypt it on the fly so that the connection appears to be secure when in fact someone is eavesdropping.

Attacking the authentication layer is not science fiction. In 2018, the UK government proposed Ghost. Ghost is supposed to be a compromise that would enable law enforcement to intercept messages without weakening encryption. The basic idea is that secure messengers like Signal would build a backdoor into the authentication layer so that law enforcement can add recipients to encrypted conversations without the participants noticing, and without compromising the encryption, which, they say, is what people care about. Of course, this compromises message confidentiality, which is what people actually care about.

Signal’s default authentication mechanism relies on a single certification authority (CA), which they control. Since Signal also controls the messaging infrastructure, this places them in the perfect position to perform the machine-in-the-middle attack (MitM attack) described in the Ghost proposal. The only defense that Signal offers its users is for them to verify a conversation’s safety number. Yet, user studies by Schröder et al. and Vaziripour et al., for instance, have found that Signal’s authentication ceremony is nearly unusable in practice: the safety number verification workflow is hard to find, hard to use, and mostly misunderstood, even by experts.

Authentication is hard, because it can’t be completely automated. Somewhere along the line, a human has to confirm that, yes, that key really should be associated with that identity. But, we can do much better than Signal’s status quo without burdening most end users. We can simplify the UX for verifying Signal’s safety numbers. We can monitor CAs like Signal’s key server using tools like Certificate Transparency. We can cross check CAs run by independent organizations. And, we can selectively delegate authentication to people we trust, like the techie in our collective, or the system administrator at work.

Authentication in Signal

In contrast to Signal’s encryption algorithm, Signal’s default authentication scheme is weak. To authenticate a public key, the Signal app asks Signal’s key server for a user’s public key. That’s it. There are no further validity checks.

This scheme makes Signal’s key server a certification authority (CA). In fact, it is the only CA that the Signal app uses. And, since most users only use Signal’s default authentication mechanism, most users rely on it exclusively. Because Signal messages are also transferred via Signal’s infrastructure, Signal is in an ideal position to perform a machine-in-the-middle attack (MitM attack). This weakness undermines Signal’s strong encryption.

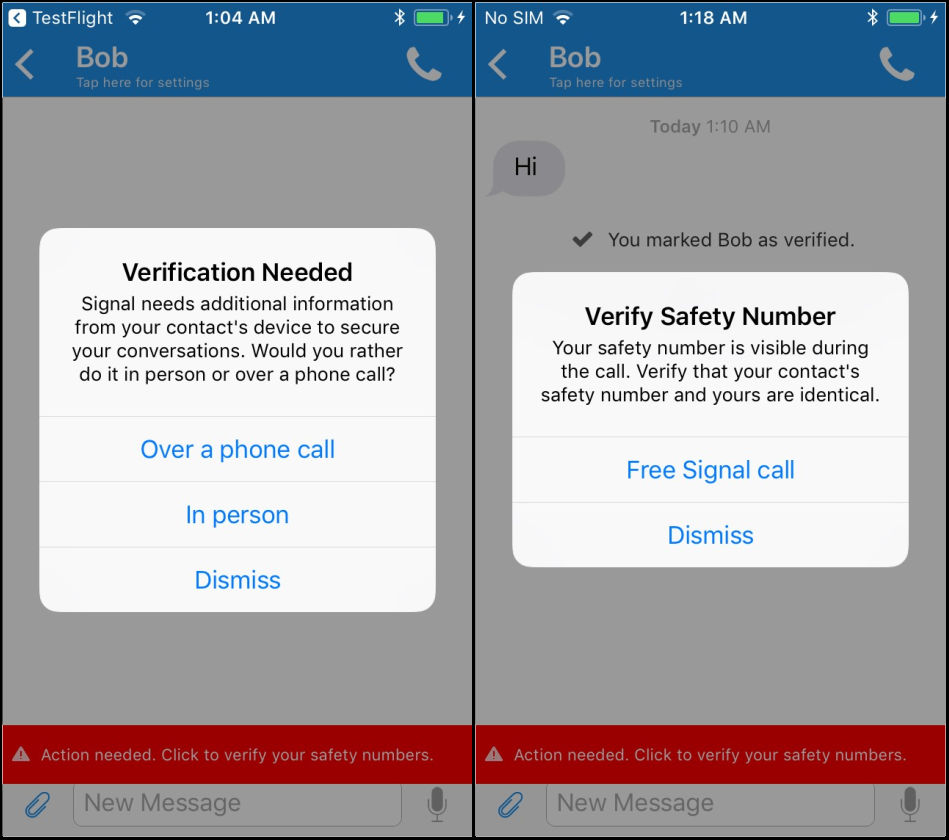

Signal also has a strong authentication mechanism based on a so-called authentication ceremony. The workflow is shown in the above figure. The user clicks on the menu, selects Conversation settings, scrolls to the bottom, taps on View safety number, compares the safety number, and finally taps on the Verified toggle, if the safety number matches.

In 2017, Vaziripour et al. published a usability study, “Is that you, Alice?”, on authentication ceremonies in secure messengers. The study was not specifically about Signal, but the messengers studied used comparable workflows. Each pair of participants in the study was told one of them needed to give their credit card number to the other participant using a particular messenger, and they should first verify that they were really communicating with their partner, and check that no one else could read their messages. The study found that only a small fraction of the participants, 14%, were able to figure out how to perform the authentication ceremony on their own. When users were taught about authentication ceremonies in general beforehand, 79% succeeded. But, it still took them 3.2 minutes to start the authentication ceremony and an additional 4.6 minutes to complete it, on average. Importantly, in the end, most participants still did not actually have a good understanding of what an authentication ceremony accomplishes. In particular, most participants only considered impersonation for which social cues (e.g., “what did we have for dinner last night?”) provide good protection, but they did not consider eavesdropping.

These results are corroborated by Schröder et al. in a similar user study from 2016, When Signal hits the Fan. In that user study, all participants were university students studying computer science and thus presumably more knowledgeable than normal users. The investigators examined Signal’s authentication ceremony and found that only 25% of the participants completed it successfully.

Due to a lack of awareness of machine-in-the-middle attacks, and the sense that phishing attacks can be detected using other mechanisms, the perceived utility of performing an authentication ceremony among normal users is presumably low. Indeed, in Dechand et al.’s 2019 study In Encryption We Don’t Trust, they found that the participants in their study don’t trust encryption to protect them:

[M]isconceptions about cryptography have lead to a lack of trust in encryption in general. The participants reported feeling vulnerable and simply assumed that they cannot protect themselves from attackers.

The amount of time it takes to perform the ceremony, and the fact that it is not integrated into users’ normal messaging workflow further raises the hurdle. Indeed, anecdotal evidence suggests that the authentication ceremony’s friction is so high that even sophisticated users rarely, if ever, perform it, and instead rely on Signal’s key server.

Signal’s Key Server, a Certification Authority

Signal’s default authentication mechanism uses Signal’s key server. Signal’s key server verifies a user’s identity and key by sending the user an SMS. The SMS includes a one-time password (OTP), which the Signal app sends back to Signal’s key server. That completes the verification. Relaying the OTP provides evidence that the user controls the phone number, makes it hard for someone to upload a fake key, and allows Signal to automatically delete outdated keys.
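To make the flow concrete, here is a minimal sketch of a key server that binds a phone number to a key only after the one-time password comes back. It is a toy model under stated assumptions, not Signal's implementation: the class `ToyKeyServer`, its method names, the six-digit OTP format, and the `send_sms` callback are all hypothetical.

```python
import hmac
import secrets


class ToyKeyServer:
    """Hypothetical key server: binds a phone number to a key after an OTP check."""

    def __init__(self, send_sms):
        self._send_sms = send_sms  # callable(phone_number, text); assumed SMS gateway
        self._pending = {}         # phone number -> (otp, claimed public key)
        self.directory = {}        # phone number -> verified public key

    def request_registration(self, phone_number, public_key):
        # Generate a 6-digit one-time password and send it out of band via SMS.
        otp = f"{secrets.randbelow(10**6):06d}"
        self._pending[phone_number] = (otp, public_key)
        self._send_sms(phone_number, otp)

    def confirm_registration(self, phone_number, otp_from_app):
        # The OTP is single use: discard it whether or not the check succeeds.
        pending = self._pending.pop(phone_number, None)
        if pending is None:
            return False
        otp, public_key = pending
        if not hmac.compare_digest(otp, otp_from_app):
            return False
        # Overwriting the directory entry also drops any outdated key.
        self.directory[phone_number] = public_key
        return True
```

Note what the check establishes: whoever can read SMS messages sent to the number can register a key for it. Nothing here ties the key to a person.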

The Signal key server doesn’t actually verify the key owner’s identity. It verifies that the owner of the key controls a resource. This is similar to how PGP Global Directory and keys.openpgp.org work: they verify control of an email address. And it is similar to how Let’s Encrypt works: it verifies control of a domain. In Signal’s case, this resource is a telephone number. Although not trivial, hijacking a telephone number doesn’t require much sophistication.

When a user relies on Signal’s key server to return an authenticated key, they are treating it like a certification authority (CA). CAs are also used in X.509, the technology that is used to secure the web. The web is secured by a small collection of global CAs, and various monitoring systems like Certificate Transparency are in place to make sure they are well behaved.

Unlike the web, Signal is a walled garden. Signal controls the sole CA; users can’t configure the Signal app to use an alternative key server. In fact, Signal doesn’t even want alternative clients. And there is no external monitoring infrastructure. In Signal, everything is centralized; they have full control. And, according to Moxie, Signal’s closed nature is a virtue:

I understand that federation and defined protocols that third parties can develop clients for are great and important ideas, but unfortunately they no longer have a place in the modern world.

- Moxie Marlinspike

Despite being distributed, X.509 CAs have demonstrated again and again that users should not rely on them. Companies like Symantec have commercial interests, and want to spend as little money as possible to defend their users. And, governments like Turkey have authoritarian interests, and want to violate their citizens’ privacy and prevent them from exercising their human rights.

Signal has two redeeming qualities. Signal is run by a non-profit, and that non-profit has a history of defending its users. These are pretty good reasons to trust Signal. But, these are social, not technical reasons; the authentication problem remains open.

Other organizations have adopted Signal’s encryption scheme and extol its virtues. Facebook has integrated it into WhatsApp, and Microsoft has added it to Skype. These are big companies who have different interests from a non-profit like Signal, and a different track record defending their users. This weakness in the authentication protocol would allow these companies to undermine the encryption’s protection.

History has shown us over and over again that governments want to spy on their residents and will force companies to cooperate with them. And, Bill Gates, who is in an excellent position to understand what happens to a company when the government is unhappy with them, has all but called for companies to add backdoors to their cryptography. Gizmodo reports that Bill Gates said:

[C]ompanies need to be careful that they’re not … advocating things that would prevent government from being able to, under appropriate review, perform the type of functions that we’ve come to count on.

- Bill Gates

We must be careful to not oversell Signal’s encryption and thereby allow companies using the Signal encryption protocol to sneak a backdoor in some place else. Encryption is one piece of the puzzle. We need stronger authentication by default. We don’t just need end-to-end encryption, we need authenticated end-to-end encryption.

Attacking Signal’s Authentication

The US government has subpoenaed Signal for user data. Happily, Signal collects so little data about its users that it was only able to return a few scraps of information, which were probably useless.

A more sophisticated subpoena, like the Lavabit subpoena, in which the US government forced Lavabit to turn their private SSL keys over to the authorities, or the more recent Tutanota subpoena, in which the German government forced Tutanota to add a mechanism to intercept messages, could require Signal to install a backdoor.

Figure: Signal controls both the key server and the messaging server. This makes it easy for them to conduct a machine-in-the-middle attack.

A possible tactic for the government is for it to force Signal to change their key server to return a different public key for a given phone number, and then reencrypt messages on the fly. As a first approximation, the attack works as follows. Let’s say the government wants information about Che. First, they force Signal to add their own key for Che to Signal’s key server. When someone, say Alberto, sends Che a message the first time, he will get the government’s key, and use that key to create a secure channel with Che.

If that is all that the government does, then the creation of the secure channel will fail, because Che doesn’t have the corresponding secret key. But, Signal also controls the messaging servers. So, the government can also force Signal to perform a machine-in-the-middle attack.

If Alberto and Che now use the secure channel to exchange messages, then Signal can provide the plaintext of all the messages to the government. Alberto and Che will only notice the machine-in-the-middle attack if they use Signal’s strong authentication mechanism, or when they are arrested. If Alberto contacts Che out of the blue to arrange a meeting, detecting the attack is particularly hard, as they will not yet have a secure channel.
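A small sketch shows why comparing fingerprints exposes this attack. The function `toy_safety_number` below is a hypothetical stand-in that hashes the two identity keys of a conversation into a short number; it is deliberately not Signal's actual safety number algorithm, and the key values are placeholders.

```python
import hashlib


def toy_safety_number(key_a: bytes, key_b: bytes) -> str:
    """Order-independent fingerprint of two identity keys (illustrative only)."""
    # Hash each key, then sort, so both participants compute the same value.
    digests = sorted(hashlib.sha256(k).digest() for k in (key_a, key_b))
    combined = hashlib.sha256(digests[0] + digests[1]).digest()
    # Render 20 decimal digits in groups of five for reading aloud.
    digits = f"{int.from_bytes(combined[:10], 'big') % 10**20:020d}"
    return " ".join(digits[i:i + 5] for i in range(0, 20, 5))


alberto_key = b"alberto-identity-key"        # placeholder key material
che_key = b"che-identity-key"
government_key = b"government-identity-key"

# Honest key server: both sides see each other's real key.
honest_alberto = toy_safety_number(alberto_key, che_key)
honest_che = toy_safety_number(che_key, alberto_key)

# Attack: each side is handed the government's key in place of the other's.
attacked_alberto = toy_safety_number(alberto_key, government_key)
attacked_che = toy_safety_number(che_key, government_key)
```

With an honest key server the two values match. Under the attack, Alberto and Che each compute a number over a different pair of keys, so the numbers differ. But they only find out if they actually compare them over a channel the attacker does not control.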

If someone, say, Fidel, has already communicated with Che, then when the government’s keys are installed on Signal’s key server, Fidel’s client will attempt to create a new session, and show Fidel a warning that the safety number with Che has changed. Che should also see a warning. Given that people don’t understand the authentication ceremony, it is not a stretch to imagine that most users will ignore this warning either because they don’t understand it, or because they simply assume the other person got a new device.
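The warning Fidel sees can be modeled as a trust-on-first-use check. The following is a minimal sketch, assuming a client that remembers the last key it accepted per contact; the class `ToyClient` and the status strings are hypothetical, and accepting the new key after warning is an assumption of this model.

```python
class ToyClient:
    """Hypothetical client that remembers the identity key it last accepted."""

    def __init__(self):
        self._known_keys = {}  # contact -> identity key last accepted
        self.verified = set()  # contacts whose safety number was checked by hand

    def mark_verified(self, contact):
        self.verified.add(contact)

    def check_key(self, contact, key_from_server):
        known = self._known_keys.get(contact)
        if known is None:
            # First contact: there is nothing to compare against.
            self._known_keys[contact] = key_from_server
            return "new-contact"
        if known == key_from_server:
            return "unchanged"
        # The key changed, so an earlier manual verification no longer applies.
        self.verified.discard(contact)
        self._known_keys[contact] = key_from_server
        return "safety-number-changed"
```

The sketch makes the two gaps visible. A first-time contact like Alberto gets no warning at all, and for Fidel the warning is indistinguishable from the one shown when Che simply gets a new phone.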

This attack isn’t novel. The Signal developers understand the importance of strong authentication, and mention exactly this issue in the security considerations section of their session management specification:

Sesame relies on users to authenticate identity public keys with their correspondents. For example, a pair of users might compare public key fingerprints for all their devices manually, or by scanning a QR code. The details of authentication methods are outside the scope of this document.

If authentication is not performed, users receive no cryptographic guarantee as to who they are communicating with.

Intelligence agencies dream of deploying this attack as a matter of course. In 2018, the Technical Director of the National Cyber Security Centre, and the Technical Director for Cryptanalysis at GCHQ proposed Ghost. Ghost is a way for service providers to cooperate with law enforcement by undermining their authentication systems instead of building backdoors into the encryption algorithms so as to not violate their “commit[ment] to support[ing] strong encryption:”

In a world of encrypted services, a potential solution could be to go back a few decades. It’s relatively easy for a service provider to silently add a law enforcement participant to a group chat or call. The service provider usually controls the identity system and so really decides who’s who and which devices are involved – they’re usually involved in introducing the parties to a chat or call. You end up with everything still being end-to-end encrypted, but there’s an extra ‘end’ on this particular communication. …

That’s a very different proposition to discuss and you don’t even have to touch the encryption.

What GCHQ proposes is just a linguistic trick. Despite their claim that use of this backdoor will be constrained by courts, that it will “enable the majority of the necessary lawful access without undermining the values we all hold dear,” it is still a way to circumvent encryption. The backdoor will be abused just as any backdoor will be abused. And, it will be abused at the latest when the technology ends up in the hands of authoritarian governments, or is broken into. A broad coalition of civil society organizations, technology companies & trade associations, and security researchers have written a more detailed rebuttal.

Strengthening Authentication

We’ve identified four modifications to Signal that we think can strengthen its authentication story. The Signal developers can make it easier to find the safety number verification screen, and improve the verification workflow. They can add mechanisms so that external parties can monitor Signal’s CA. They can support third-party CAs and crosscheck keys. And, they can add support for ad-hoc certifications thereby enabling organizations and users to use their own infrastructure to authenticate keys.

Improve the Safety Number Verification Workflow

In the previously mentioned study by Vaziripour et al., the authors found that the participants had a hard time finding and performing the authentication ceremony. Further, even after some general instruction, the participants didn’t really understand the ceremony’s purpose. They thought that it prevents phishing attacks, which, they correctly noted, can also be mitigated using social cues, and didn’t understand that it averts machine-in-the-middle attacks.

Figure: Screenshots of Vaziripour et al.'s modified authentication ceremony for Signal.

In a followup paper, “Action Needed! Helping Users Find and Complete the Authentication Ceremony in Signal”, Vaziripour et al. designed and tested several modifications to Signal that made starting the authentication ceremony easier, and simplified the authentication ceremony itself. First, instead of requiring that users go out of their way to check a conversation’s safety numbers, they modified Signal to more closely integrate the authentication ceremony into the normal messaging workflow. Specifically, they added more prominent cues showing the conversation’s authentication status, and linked them to the authentication ceremony. Second, based on participants' feedback, they streamlined the existing QR-code-based ceremony, and they added a voice-based authentication ceremony, which users could use when they could not scan the QR code, because they are not physically together.

Their evaluation found that these changes not only dramatically improved how often the study’s participants successfully authenticated their conversation partners, but also significantly decreased the time it took them to complete the authentication ceremony. Interestingly, although the authors added some additional instructions to the authentication ceremonies, they did not try to give the participants a better understanding of what they were doing; better comprehension was a secondary goal. But, because the participants were prompted by the system to perform the authentication ceremony, they did it anyway.

Facilitate External Monitoring

As previously described, the X.509 ecosystem has had repeated issues with incorrectly issued certifications. This was doubly problematic due to the large amount of time that would pass until the issues were identified and fixed. Certificate Transparency was created to improve this situation by using logging and monitoring to increase accountability.

The basic idea behind Certificate Transparency is twofold. First, CAs don’t just issue certifications, they also add them to a verifiable, public, append-only log. Second, external monitors and clients check the log. Monitors identify when a new certification is added for a particular identity. This allows the entity that controls the identity to promptly identify a misissued certification. When a client validates a certification, it can ensure that the certification appears in a Certificate Transparency log. If it doesn’t, then the client can reject the certification based on the assumption that the entity that controls the identity didn’t have an opportunity to check it. By only accepting certifications that are present in the Certificate Transparency log, clients force CAs to participate in Certificate Transparency. And, the monitors, by watching the logs and publicizing errors, provide CAs a strong incentive to not only not be malicious, but also not be sloppy.
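The roles of log, monitor, and client can be sketched in a few lines. This is a simplification under stated assumptions: real Certificate Transparency logs use Merkle trees and signed tree heads, whereas this toy uses a plain hash chain, and every name here (`ToyTransparencyLog`, `unexpected_keys`, `client_accepts`) is hypothetical.

```python
import hashlib

GENESIS = hashlib.sha256(b"toy-log-genesis").digest()


def chain(previous_head: bytes, identity: str, public_key: bytes) -> bytes:
    # Hash each field separately so the field boundaries are unambiguous.
    entry = hashlib.sha256(identity.encode()).digest() + hashlib.sha256(public_key).digest()
    return hashlib.sha256(previous_head + entry).digest()


class ToyTransparencyLog:
    """Hypothetical append-only log of (identity, public key) certifications."""

    def __init__(self):
        self.entries = []
        self.head = GENESIS

    def append(self, identity: str, public_key: bytes) -> bytes:
        self.entries.append((identity, public_key))
        self.head = chain(self.head, identity, public_key)
        return self.head


def recompute_head(entries) -> bytes:
    """Auditor: replay the entries to check them against a published head."""
    head = GENESIS
    for identity, public_key in entries:
        head = chain(head, identity, public_key)
    return head


def unexpected_keys(log, identity, own_keys):
    """Monitor: keys logged for `identity` that its owner does not recognize."""
    return [key for who, key in log.entries if who == identity and key not in own_keys]


def client_accepts(log, identity, public_key) -> bool:
    """Client: only accept certifications that appear in the public log."""
    return (identity, public_key) in log.entries
```

If a key server had to log every key it hands out, a substituted key for Che would show up in `unexpected_keys` the next time Che's monitor ran, and a key that was never logged would be rejected by `client_accepts`.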

A more sophisticated version of Certificate Transparency is called CONIKS. Protonmail is working on using a variant of this to allow its users to monitor their own key server. Signal could provide a similar service.

Consider Third-Party CAs

A more aggressive step would be for Signal to allow third parties to run CAs, and then have the Signal app crosscheck certifications. If these CAs were run by different organizations in different jurisdictions, then it would be significantly harder for an attacker to replace a key: they would potentially have to compromise multiple organizations subject to different rules.
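A cross-check could look roughly like the following sketch. It assumes each CA can be modeled as a lookup function from an identity to a key (or `None` if it has no answer); the function `cross_check` and the `quorum` parameter are hypothetical and not part of any existing Signal API.

```python
from collections import Counter


def cross_check(identity, lookups, quorum):
    """Return the key for `identity` only if the queried CAs agree on it.

    `lookups` is a list of callables, one per independent CA; `quorum` is the
    minimum number of CAs that must return the same key.
    """
    answers = [lookup(identity) for lookup in lookups]
    counts = Counter(answer for answer in answers if answer is not None)
    if len(counts) != 1:
        return None  # either no CA answered, or the CAs disagree
    key, votes = next(iter(counts.items()))
    return key if votes >= quorum else None
```

Treating any disagreement as a failure is a design choice of this sketch. It means that an attacker who compromises one CA can block authentication, but cannot silently substitute a key.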

The CAs could potentially use different identifiers as well. Currently, Signal only uses telephone numbers. Some CAs could verify a user’s email address. Others could use social proofs. These additional factors would mitigate the aforementioned problem of telephone number hijacking, for example via SIM swapping.

Figure: A screenshot of the New York Times's Twitter profile linking to their contact information.

Social proofs were pioneered by keybase.io and are now championed by keyoxide. A social proof is basically an attestation a user posts on their social media profile, and which is signed by their key. If Alice knows Bob’s Twitter handle, and Bob has published a social proof on Twitter, then she can find the social proof in his Twitter profile. Since only Bob or Twitter can modify the profile, the social proof demonstrates possession of the key. This fits nicely into social media, as many users also post their contact details there, as the screenshot shows. This effectively turns Twitter into a CA.

This has the nice effect of also solving a long-standing complaint that Signal requires phone numbers in order to communicate with other people. As Vice reports in an article about Signal, some people don’t want to give out their phone number:

As a woman, handing out my phone number to a stranger creates a moderate risk: What if he calls me in the middle of the night? What if he harasses me over SMS? What if I have to change my number to get away from him?

Support Ad-Hoc Certifications

Instead of only allowing a few select organizations to issue certifications, Signal could allow anyone to certify anyone else. To make use of this, users would need a way to configure the set of CAs that they are willing to rely on.

Because using this feature would require manual configuration, it might seem that users are unlikely to adopt it. But, this functionality is interesting for groups of activists and companies who want to delegate authentication to a more trusted entity like an in-house techie or system administrator. If done as part of the on-boarding process, then users don’t even have to do the configuration on their own. This feature can also be interesting for normal users. For instance, a business like a bank could send a QR code in their on-boarding correspondence. When the user scans it with Signal, Signal could prompt the user to add the scanned key as a CA for identities associated with the bank (e.g., scoped to email addresses for @bank.com).

OpenPGP actually already has the mechanisms needed to realize this type of functionality. In particular, it has certification artifacts. CAs can delegate to other CAs. Certifications can be scoped, e.g., to a particular domain. And, it is even possible to partially trust a CA.

In OpenPGP, certifications are self-authenticating artifacts. By using artifacts that an OpenPGP implementation can verify rather than relying on the fact that an authenticated server returned an assertion, it is possible to pass the certifications around via an unsecured channel using other mechanisms like a gpg sync-style keylist. Further, since a key server does not always have to be queried to authenticate a binding, this can also increase users’ privacy.

In the OpenPGP world, an administrator can create and manage a CA by hand using gpg. But, this is complicated and error prone, and historically few organizations have done it. OpenPGP CA is a new tool that guides the administrator through the process of setting up an in-house CA. It automates the signing, makes it easy to create bridges between organizations, and can publish the certificates and the certifications.

Using a centralized CA in an organization means, first of all, that the work of doing the authentication is largely moved to the CA’s administrator. Instead of each member in an organization with n members having to perform an authentication ceremony with the other n-1 members (for a total of (n * (n-1))/2 authentication ceremonies!), each member only has to perform one authentication ceremony with the administrator, for a total of n-1 authentication ceremonies. This can be part of the on-boarding process, which employees in a company often go through anyway. And, since the administrator is present, the individual members don’t actually need to understand what they are doing. Long-term this has the additional advantage that users don’t need to worry about maintaining their keyring when someone joins the organization or rotates their keys: the administrator will take care of it.

To make the numbers more concrete, consider an organization with just 15 members. Using Signal’s current approach, the members would have to do 105 authentication ceremonies, and still have to worry about keeping their authentication information up to date as the organization changes. Using a CA, they would have to do just 14 authentication ceremonies, and then everyone can authenticate everyone else.
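The two counts are easy to check for other organization sizes. The helper names below are mine; the formulas are the ones given above, with the administrator counted as one of the n members.

```python
def pairwise_ceremonies(n: int) -> int:
    """Ceremonies needed when every pair of members authenticates directly."""
    return n * (n - 1) // 2


def ca_ceremonies(n: int) -> int:
    """Ceremonies needed when each member authenticates once with the administrator."""
    return n - 1


for members in (5, 15, 50, 150):
    print(members, pairwise_ceremonies(members), ca_ceremonies(members))
```

The pairwise count grows quadratically while the CA count grows linearly, so the gap widens quickly as an organization grows.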

Figure: Two OpenPGP CA-using organizations and various certifications that OpenPGP CA helps create. Using these certifications, Alice is able to authenticate a key for Bob.

An administrator of an organization, say Alpha, can also authenticate people outside the organization. And, if there are a bunch of people from the same organization, say Beta, and they run their own CA, then the administrator of Alpha could certify the Beta CA as a CA. Then Alpha’s members would automatically use certifications from Beta’s CA to authenticate members of Beta. By itself, this is dangerous: it gives Beta a lot of power, which could be abused to trick users. This power can be limited by using scoping rules. For instance, in OpenPGP, it is possible for Alpha’s admin to indicate that certifications from Beta’s CA should only be respected for identities associated with Beta. This is illustrated in the above figure.
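The effect of a scoping rule can be sketched as follows. This is a toy model, not OpenPGP's data format: the types `TrustedCA` and `Certification`, the exact-domain match, and the `.example` addresses are all assumptions made for illustration, and signature verification is left out entirely.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TrustedCA:
    name: str
    scope: Optional[str] = None  # None means unrestricted; otherwise an email domain


@dataclass(frozen=True)
class Certification:
    issuer: str  # name of the CA that made the certification
    email: str
    key: bytes


def is_authenticated(email, key, trusted_cas, certifications) -> bool:
    """Accept a binding only if a trusted CA certified it within its scope."""
    domain = email.rpartition("@")[2].lower()
    for ca in trusted_cas:
        if ca.scope is not None and ca.scope.lower() != domain:
            continue  # this CA is not allowed to speak for that domain
        if Certification(ca.name, email, key) in certifications:
            return True
    return False


# Alpha's members trust their own CA fully, and Beta's CA only for Beta's domain.
alpha_trust = [TrustedCA("alpha-ca"), TrustedCA("beta-ca", scope="beta.example")]
certifications = {
    Certification("beta-ca", "bob@beta.example", b"bob-key"),
    Certification("beta-ca", "alice@alpha.example", b"mallory-key"),  # out of scope
}
```

With these rules, Beta's certification of Bob is honored, while Beta's attempt to certify a key for an Alpha address is ignored.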

OpenPGP has another interesting authentication mechanism. Instead of completely trusting a certification from a CA, it is possible to partially trust it. This means if Alice partially trusts a CA, then she requires corroboration from other CAs. This mechanism is particularly useful when Alice uses CAs whose interests are insufficiently aligned with her own. By partially relying on multiple public CAs, for instance, Alice is able to protect herself from a single, or even multiple compromised CAs.
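Partial trust can be modeled as trust amounts that have to add up. The sketch below borrows the conventional OpenPGP trust-signature values of 60 for partial trust and 120 for complete trust. The additive rule, and the names `trust_amount` and `is_authenticated`, are simplifying assumptions for illustration rather than a description of any particular implementation.

```python
FULL_TRUST = 120     # conventional OpenPGP value for complete trust
PARTIAL_TRUST = 60   # conventional OpenPGP value for partial trust


def trust_amount(binding, ca_trust, certifications) -> int:
    """Sum the trust of the distinct CAs that certified `binding`.

    `ca_trust` maps a CA name to how much Alice trusts it; `certifications`
    is an iterable of (ca_name, binding) pairs.
    """
    issuers = {ca for ca, certified in certifications if certified == binding}
    return min(FULL_TRUST, sum(ca_trust.get(ca, 0) for ca in issuers))


def is_authenticated(binding, ca_trust, certifications) -> bool:
    return trust_amount(binding, ca_trust, certifications) >= FULL_TRUST
```

If Alice assigns 60 to each of three public CAs, a single compromised CA cannot authenticate a bogus key on its own; it needs at least one other CA to corroborate it. Lowering the per-CA amount raises the number of CAs an attacker has to compromise.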

OpenPGP’s authentication mechanisms are expressive and flexible. And they have existed for decades. Unfortunately, they haven’t seen widespread use as the tooling has been difficult to use. However, that doesn’t mean that the mechanisms themselves are bad. It would be good to see Signal help develop tooling in this space, and perhaps even directly use OpenPGP for its authentication mechanisms. If Signal were to use OpenPGP, they could create certificates that have a certification-capable primary key and add the identity key as a new type of subkey. They wouldn’t even need to support OpenPGP’s encryption.

Conclusion

When most people think about cryptography, if they think about it at all, they think about encryption. This impression is reinforced by popular terminology. We talk about end-to-end encryption, and rarely mention authentication. This is perhaps because we know how to automate strong end-to-end encryption, but we haven’t figured out how to automate authentication. And, because we don’t want to burden users (users want to be secure; they don’t want to do security), we focus on encryption. But, this is disingenuous. A connection is only as secure as its weakest link.

Unfortunately, Signal’s default authentication scheme is weak. It is arguably worse than X.509’s CA system as used on the web, which is notoriously bad. It relies on a single CA, which is controlled by Signal who also controls the messaging infrastructure. This places Signal in an optimal position to perform a machine-in-the-middle attack on their users like the one described in GCHQ’s Ghost proposal. But, Signal has rightfully earned a trustworthy reputation. Other companies that have copied Signal’s model—both their encryption protocol, and their authentication scheme—haven’t. And, if these companies are anything like X.509 operators, then it isn’t a matter of whether they will deliver incorrect certifications, but how often, and how well we can mitigate them. By deemphasizing authentication and focusing on the strong part of the system, the encryption, we give these companies an unearned pass.

In this blog post, I’ve suggested several ways that Signal can improve their authentication scheme. Specifically, Signal should more closely integrate strong authentication into the normal messaging workflow, and the authentication ceremony’s usability should be improved. Beyond that, Signal could use other CAs. As a first step, Signal could allow other organizations in different jurisdictions to run CAs, and the app could cross check the results. As a next step, individual organizations could run their own CAs. The most flexible approach would be to adopt a decentralized trust model. This could be modeled on OpenPGP’s highly expressive authentication mechanisms. In OpenPGP, a certification is an artifact that can be passed around and verified; the authentication information is not an implicit byproduct of a lookup. OpenPGP also provides two mechanisms to limit the power of CAs. Certifications can be scoped. For instance, a certification might say that the example.org CA should only be relied upon to certify email addresses for example.org. And, certifications can be partially trusted. That is, it is possible to say that an assertion is not fully authoritative, but needs to be corroborated by other CAs.

If we really want secure communication, then we need better authentication mechanisms. Signal is in a position to lead the charge. If they do, we can hold other secure messengers to the same standard: authenticated end-to-end encryption.

Copyright (c) 2018-2023, p≡p foundation.

This work is licensed under a Creative Commons Attribution 4.0 International License.
